What is Conversion Rate Optimization? Full Guide

What is conversion rate optimization (CRO)? Learn its process, key metrics, and ROI to turn more website visitors into customers. Actionable guide.

Most companies don’t have a traffic problem first. They have an efficiency problem.

The ads are running. SEO is bringing in visits. Sales wants more pipeline. Finance wants better payback. Then everyone looks at the website and realizes the same uncomfortable truth: you’re already paying for attention, but too much of that attention dies in the funnel.

That’s where conversion rate optimization matters. Not as a collection of random tests. Not as a design exercise. And not as a debate about button colors.

A mature CRO program is a growth system. It finds friction, removes it, measures business impact, and repeats. For boards and leadership teams, that matters because better conversion performance changes the economics of acquisition. It can lower wasted spend, improve payback, and create more revenue from traffic you already own.

Beyond Button Colors: Why CRO Is Your Growth Engine

A lot of executives hear “CRO” and think of small website tweaks. Different CTA colors. Shorter headlines. Slightly different form layouts. Those things can matter, but that view is too narrow to be useful.

What is conversion rate optimization in practice? It’s the discipline of improving the path from visit to business outcome. Sometimes that means changing a page. Sometimes it means changing the offer, the sequence, the proof, the checkout flow, or the way paid traffic matches landing page intent.

What boards should care about

The shallow version of CRO asks, “Did the page convert better?”

The serious version asks harder questions:

- Did CAC payback improve? More efficient conversion means the same media spend can produce more customers or pipeline.

- Did lead quality hold up? A lift in form fills that weakens sales acceptance isn’t progress.

- Did early customer value improve? Better onboarding, stronger qualification, and tighter messaging can improve downstream revenue quality.

- Did the change survive holdout thinking? If the result only looks good inside platform reporting, leadership should be skeptical.

Conversion rate is useful, but it isn’t the whole story.

That broader view matters because attribution is noisier than many teams admit. Dylan Ander argues that conversion rate alone isn’t the best indicator of success, and recommends holdout testing, CAC payback windows, and 60-day LTV as stronger measures of performance, especially when platform attribution from Meta becomes less reliable (YouTube discussion).
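To make the CAC point concrete, here is a minimal Python sketch. It assumes the standard definitions: CAC is spend divided by customers acquired, and payback is CAC divided by monthly gross margin per customer. The function names and every number are hypothetical, chosen only to show how a conversion lift changes the economics of the same media spend.

```python
def cac(spend: float, visitors: int, conversion_rate_pct: float) -> float:
    """Customer acquisition cost for a given spend, traffic, and conversion rate (%)."""
    customers = visitors * conversion_rate_pct / 100
    if customers <= 0:
        raise ValueError("no customers acquired; CAC is undefined")
    return spend / customers


def payback_months(cac_value: float, monthly_margin_per_customer: float) -> float:
    """Months of gross margin needed to recover the acquisition cost."""
    if monthly_margin_per_customer <= 0:
        raise ValueError("monthly margin must be positive")
    return cac_value / monthly_margin_per_customer


# Hypothetical inputs: same spend and traffic, two different conversion rates.
SPEND = 50_000.0
VISITORS = 100_000
MONTHLY_MARGIN = 5.0  # assumed gross margin per customer per month

cac_before = cac(SPEND, VISITORS, 2.5)
cac_after = cac(SPEND, VISITORS, 3.0)
payback_before = payback_months(cac_before, MONTHLY_MARGIN)
payback_after = payback_months(cac_after, MONTHLY_MARGIN)

print(f"CAC: {cac_before:.2f} -> {cac_after:.2f}")
print(f"Payback (months): {payback_before:.2f} -> {payback_after:.2f}")
```

The sketch ignores lead quality and attribution noise, which is exactly why the questions above still need to be asked alongside it.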

What works and what doesn’t

What works is a repeatable operating model. Research first. Test second. Judge outcomes against business metrics, not vanity wins.

What doesn’t work is running disconnected experiments with no prioritization. Many teams also over-focus on visual polish while ignoring messaging clarity, offer structure, trust, and funnel continuity.

If you want a practical companion list of tactical ideas, Orbit AI’s guide to 10 High-Impact Conversion Rate Optimization Tips is a useful reference. The important point is that tactics only produce durable gains when they sit inside a system.

Understanding Conversion Rate Optimization Fundamentals

At its simplest, conversion rate optimization is the process of getting more of your existing visitors to take a desired action.

That action depends on the business model. For an e-commerce brand, it may be a purchase. For SaaS, it may be a free trial or demo request. For B2B, it may be a qualified lead form.

Think of your website as a bucket you’re already paying to fill. SEO, paid search, email, and social all pour traffic into it. CRO is the work of fixing the leaks.

The basic formula

The core formula is simple:

Conversion rate = (Conversions / Visitors) × 100

The formula is easy. The implications are not.

A small movement in conversion rate can have an outsized commercial effect because it improves the output of traffic you already paid to acquire. The average conversion rate across industries is approximately 2.9%. Moving from 2.5% to 3.0% is only half a percentage point in absolute terms, but it is a 20% relative increase, which means 20% more sales from the same traffic. That is why CRO can have such an impact on revenue without requiring more traffic (SQ Magazine conversion rate optimization statistics).
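The formula and the 2.5% to 3.0% example can be checked in a few lines of Python. This is a minimal sketch: the function names and the 10,000-visitor figure are illustrative assumptions, not part of any benchmark source.

```python
def conversion_rate(conversions: int, visitors: int) -> float:
    """Conversion rate as a percentage: conversions / visitors * 100."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    return conversions / visitors * 100


def relative_lift(baseline_rate: float, new_rate: float) -> float:
    """Relative change from baseline_rate to new_rate, in percent."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return (new_rate - baseline_rate) / baseline_rate * 100


# Hypothetical traffic: 10,000 visitors in both scenarios.
visitors = 10_000
rate_before = conversion_rate(250, visitors)  # 250 conversions
rate_after = conversion_rate(300, visitors)   # 300 conversions
lift = relative_lift(rate_before, rate_after)

print(f"{rate_before:.1f}% -> {rate_after:.1f}% is a {lift:.0f}% relative lift")
```

The point of separating the two functions is that leadership conversations often mix up percentage points (0.5) with relative lift (20%). Reporting both avoids that confusion.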

Why the benchmark matters

Leadership teams often ask, “What’s a good conversion rate?”

The honest answer is that context matters. A strong e-commerce checkout flow, a SaaS homepage, and a B2B enterprise demo page are solving different jobs. The benchmark is less important than the delta between your current performance and your realistic ceiling.

Still, directional benchmarks help frame the opportunity:

- Across all industries: average conversion rate is 2.9%

- Professional services: 4.6%

- Industrial: 4.0%

- Automotive: 3.7%

- B2B SaaS: 1.1%

- Paid search: 2.9%

- Organic search: 2.8%

- Referrals: 2.6%

- Email: 2.3%

All of those figures come from the same benchmark source above, and they show why a single “good conversion rate” target is misleading.

CRO is about value capture

Many teams think about growth in terms of more traffic. CRO starts with a different question: are you extracting full value from the traffic you already have?

That shift changes budget conversations. It also changes the relationship between marketing and finance. Instead of asking for more spend before fixing conversion leaks, a CRO-led team strengthens the economics of existing acquisition first.

Board-level view: Traffic acquisition creates potential. CRO turns that potential into realized revenue.

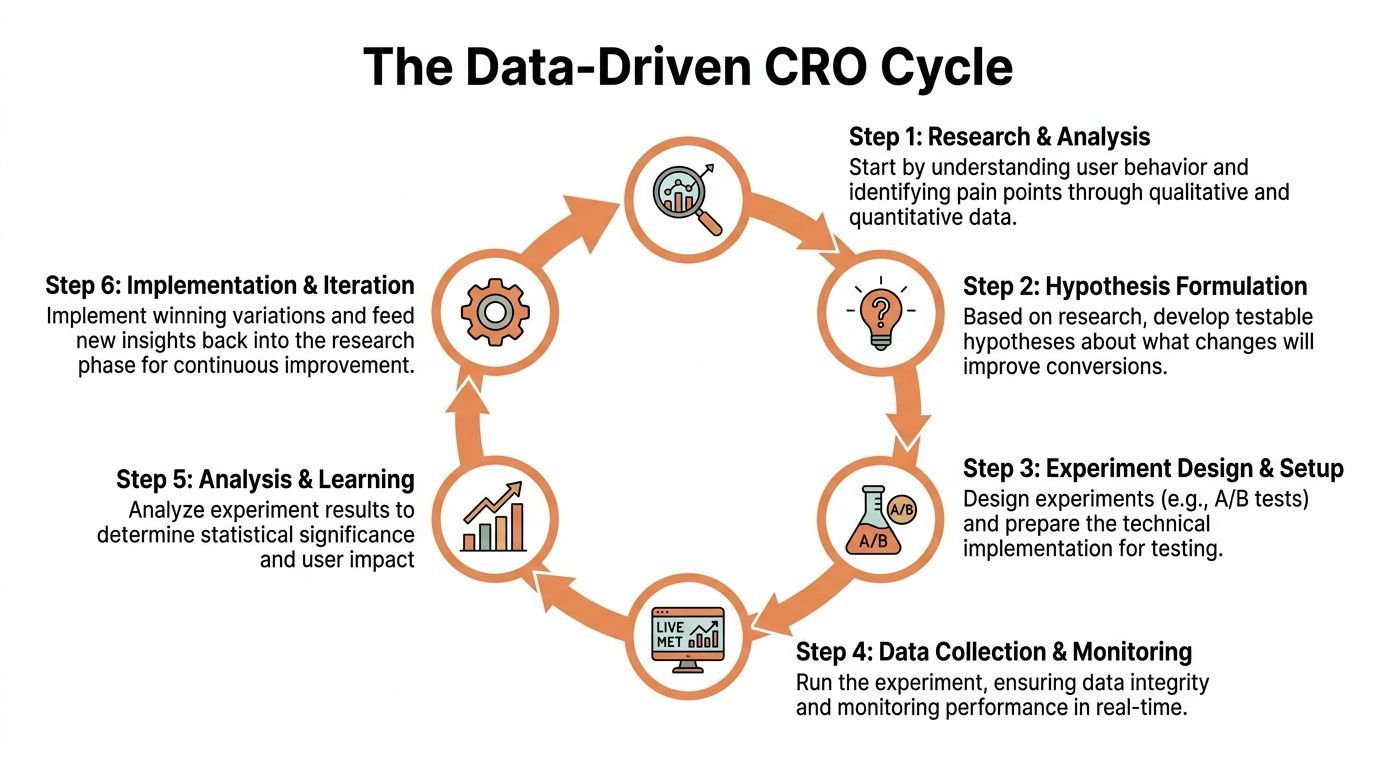

The Data-Driven CRO Process: From Research to Results

Professional CRO isn’t a string of random tests. It’s an operating loop.

The strongest teams run it the same way product teams run disciplined experimentation. They gather evidence, identify friction, form hypotheses, test carefully, and turn every result into a decision.

Near the start of that loop, it helps to visualize the process clearly.

Research starts with the funnel

The first job is locating where value is being lost.

Quantitative funnel analysis in tools such as Google Analytics can reveal exact drop-off points. Heatmaps and behavior tools then add the missing layer by showing what people do on the page. According to Lucky Orange’s CRO guide, funnel analysis can surface precise leakage points, and heatmaps often expose ignored CTAs or clicks on non-interactive elements. Fixing those issues has led to 20-30% conversion lifts in documented examples (Lucky Orange conversion rate optimization guide).

That sequence matters. Analytics tells you where the problem lives. Behavioral data helps explain why.
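The "where" half of that sequence is simple arithmetic once step counts have been exported from an analytics tool. The sketch below is a hedged illustration, not a Google Analytics API call: the step names and counts are hypothetical, and the input is assumed to be an ordered list of (step, users) pairs.

```python
from typing import List, Tuple

Step = Tuple[str, int]


def funnel_dropoff(steps: List[Step]) -> List[Tuple[str, str, float]]:
    """For each consecutive pair of steps, return (from_step, to_step, drop-off %)."""
    results = []
    for (name_a, count_a), (name_b, count_b) in zip(steps, steps[1:]):
        if count_a <= 0:
            raise ValueError(f"step {name_a!r} has no users")
        drop_pct = (count_a - count_b) / count_a * 100
        results.append((name_a, name_b, drop_pct))
    return results


def worst_step(steps: List[Step]) -> Tuple[str, str, float]:
    """Return the transition with the largest percentage drop-off."""
    return max(funnel_dropoff(steps), key=lambda row: row[2])


# Hypothetical exported counts, ordered from first to last funnel stage.
funnel = [
    ("landing", 10_000),
    ("pricing", 4_000),
    ("signup_form", 1_000),
    ("trial_started", 600),
]

for src, dst, drop in funnel_dropoff(funnel):
    print(f"{src} -> {dst}: {drop:.0f}% drop-off")

leak = worst_step(funnel)
print(f"Largest leak: {leak[0]} -> {leak[1]}")
```

A percentage drop-off only says where users leave. Whether that step deserves attention first still depends on how much valuable traffic passes through it, and the "why" has to come from behavioral data.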

For leaders building internal capability, strong data-driven design principles are useful because they force design and conversion decisions to start with evidence rather than taste.

What research should include

A proper research pass usually combines several inputs:

- Funnel analytics: Find where users exit between key stages.

- Heatmaps and session recordings: Observe hesitation, dead clicks, rage clicks, and ignored elements.

- Device segmentation: Separate mobile, desktop, new users, returning users, and major traffic sources.

- Voice of customer input: Pull objections from surveys, chat logs, sales calls, and support tickets.

- Commercial context: Check whether the pages under review influence revenue, trial quality, or pipeline.

Many programs falter at this point. They jump from “conversion rate feels low” straight into test ideas. That creates noise, not learning.

Practical rule: Prioritize the one to three bottlenecks affecting the highest volume of valuable traffic first.

Hypotheses need a reason, not a guess

Once research identifies a problem, the next step is not “let’s test something.” It’s to write a specific hypothesis.

A useful hypothesis sounds like this:

- If we move proof closer to the CTA, more visitors will start trials because hesitation appears strongest near the decision point.

- If we reduce form friction, more qualified leads will complete submission because replay data shows abandonment during unnecessary fields.

- If we align ad message and landing page promise more tightly, more visitors will continue because intent mismatch is causing early exits.

The quality of the hypothesis often determines the quality of the test. Weak hypotheses usually come from opinion. Strong ones come from observed behavior.

Testing should be controlled and boring

The best experimentation programs look uneventful from the outside. That’s a good sign.

They don’t chase novelty. They isolate variables, monitor tracking, and avoid changing five things at once unless they are intentionally testing a larger page concept.

This video is a useful visual explainer of how structured CRO testing works in practice.

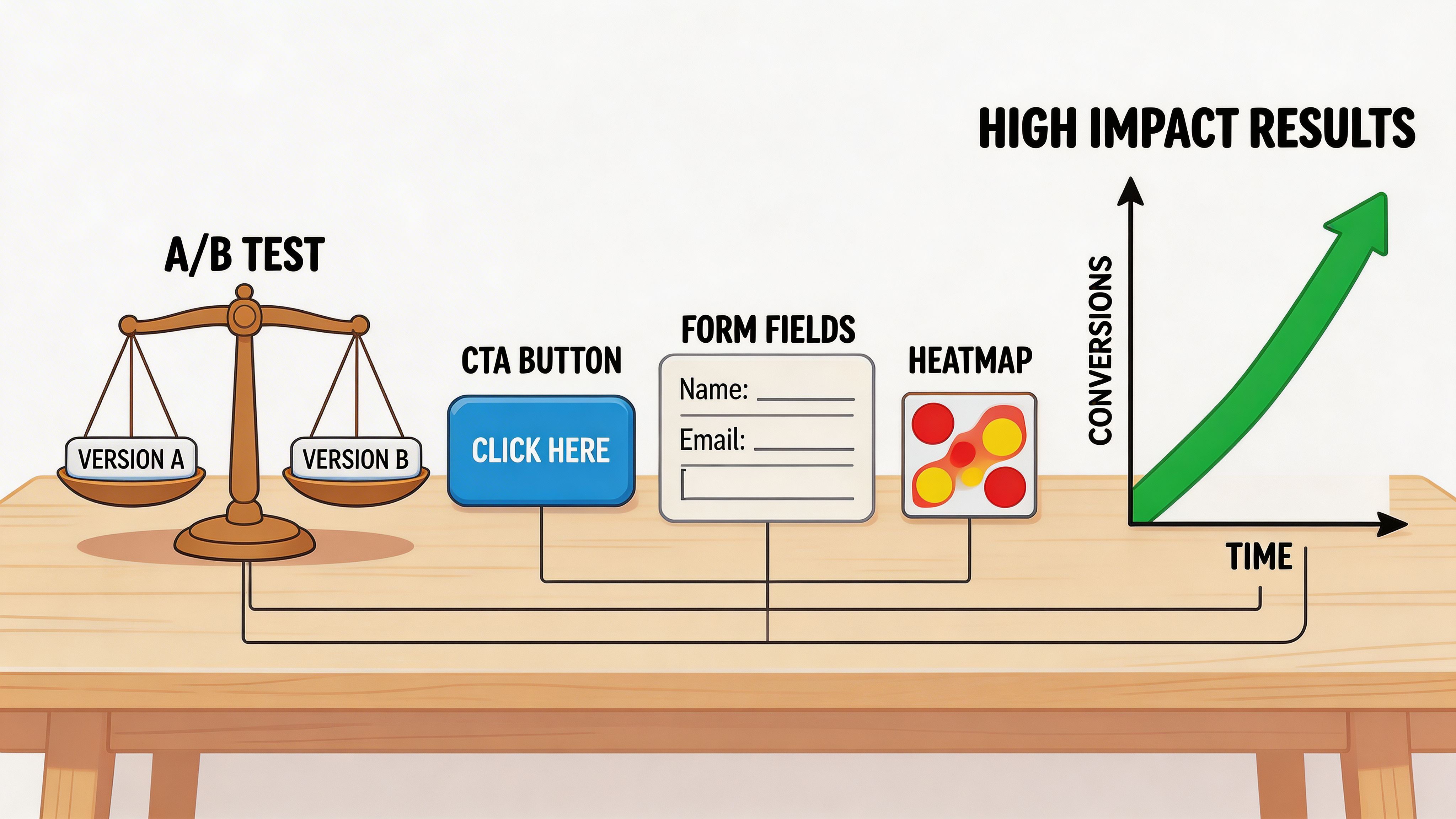

Controlled testing usually falls into three buckets:

- A/B tests: Best for validating one primary change against a control.

- Multivariate tests: Test several elements in combination. They are more demanding and only useful when traffic and implementation maturity are high enough to support many variants.

- Direct implementation: Appropriate when the issue is obvious enough that testing would only delay a fix, such as broken UI, unclear error states, or a missing mobile CTA.
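For an A/B test, one common way to judge whether a difference is more than noise is a two-proportion z-test. The sketch below is an assumption about method, not a description of how any particular testing platform computes significance, and the visitor and conversion counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist
from typing import Tuple


def two_proportion_z_test(
    conv_a: int, n_a: int, conv_b: int, n_b: int
) -> Tuple[float, float]:
    """Return (z, two-sided p-value) comparing variant B against control A."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("both groups need at least one visitor")
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; rates are degenerate")
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical test: control converts 250 of 10,000, variant 300 of 10,000.
z, p_value = two_proportion_z_test(250, 10_000, 300, 10_000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

This check assumes the sample size was fixed before the test started. Looking at the p-value repeatedly and stopping at the first favorable reading inflates false positives, which is one reason controlled testing should stay boring.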

Learning is the product

Winning tests matter. Losing tests matter too.

An executive team should expect a mature CRO function to produce two outputs every cycle:

- Performance gains: Better conversion behavior on key pages or flows.

- Institutional knowledge: Clearer understanding of customer objections, motivations, and friction points.

That learning can influence more than the website. It often sharpens ad messaging, email sequencing, pricing communication, and sales enablement.

A strong SEO and CRO relationship is especially important. If organic acquisition is a priority, improving landing-page efficiency makes every SEO gain more valuable. This is one reason teams often pair CRO work with broader funnel analysis such as https://ezcaa.com/blog/how-to-increase-organic-traffic.

Why sprint-based CRO works

One-off tests rarely change a business. Repeated learning cycles do.

That’s why sprint-based CRO is more effective than “test whenever someone has an idea.” A sprint creates fixed time for research, prioritization, implementation, readout, and iteration. It gives leadership visibility. It also prevents endless backlogs of half-formed ideas.

The discipline is simple. Research the funnel. Find friction. Test changes that address it. Keep what works. Document what doesn’t. Repeat.

Essential CRO Metrics and the Tools to Track Them

Most reporting stacks are overloaded with metrics and short on decision-making value.

CRO works better when leadership separates core business outcomes from diagnostic signals. That distinction keeps teams from celebrating movement in secondary metrics while the core conversion path stays broken.

Macro conversions and micro conversions

Every CRO program should define both.

Macro conversions are the outcomes the business needs. Purchases, demo requests, qualified lead submissions, booked calls, trial starts, or completed checkouts.

Micro conversions are the steps that indicate intent. Product page views, pricing page visits, add-to-cart events, account starts, scroll depth on key pages, or engagement with proof elements.

The macro metric tells you whether the machine is producing value. The micro metrics tell you where the machine is slowing down.

A board doesn’t need every micro-conversion chart. The operating team does. Leadership should ask whether those micro signals map cleanly to the stages that matter commercially.

The metrics that deserve executive attention

A practical CRO dashboard usually needs these categories:

The key trade-off is this: a simple dashboard is easier to read, but an oversimplified dashboard hides the cause of underperformance.

The tools and what each one is for

A modern CRO stack usually has four layers.

Analytics tools

Google Analytics 4 is the baseline for event tracking, funnel views, and source-level performance. It answers questions like where users entered, which path they took, and where they exited.

Use analytics to map the funnel. Don’t expect it to explain user behavior on its own.

Behavior tools

Hotjar, Lucky Orange, and similar tools help teams watch interaction patterns. Heatmaps can reveal ignored content, misplaced calls to action, and dead-click zones. Session recordings expose friction that aggregate reports can’t show.

These tools are where many teams discover that the problem wasn’t low intent at all. It was confusion.

Experimentation tools

Optimizely, VWO, and other testing platforms let teams run controlled experiments and compare variants. The value here isn’t the software by itself. It’s the ability to validate a hypothesis with discipline.

A testing platform without a research process usually creates decorative experimentation.

Feedback tools

Survicate, Typeform, on-site surveys, chat logs, and post-conversion questionnaires give context you won’t get from clicks alone.

This matters most when users hesitate for reasons analytics can’t see, such as trust concerns, pricing confusion, missing use-case information, or procurement friction.

A healthy CRO stack doesn’t just measure actions. It captures intent, friction, and outcome in the same system.

What leadership teams often miss

Executives sometimes ask for a single conversion number and a list of tests. That’s not enough.

A credible CRO program also needs:

- Clean event definitions: Teams need to agree on what counts as a conversion.

- Stable attribution logic: Especially when platform reporting is unreliable.

- Segmentation discipline: Mobile and desktop users rarely behave the same way.

- Readout standards: Every test should document hypothesis, setup, result, and business implication.

Without those controls, the organization ends up with activity instead of insight.
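A readout standard can be as simple as a record that refuses to be filed half-empty. The following is a minimal sketch of that idea in Python; the class name, field names, and example text are hypothetical and only mirror the four elements listed above (hypothesis, setup, result, business implication).

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ExperimentReadout:
    """One record per test, covering the four elements of a readout."""
    hypothesis: str
    setup: str
    result: str
    business_implication: str

    def missing_fields(self) -> List[str]:
        """Names of fields that were left blank."""
        return [name for name, value in vars(self).items() if not value.strip()]


# Hypothetical readout where the business implication was never written down.
readout = ExperimentReadout(
    hypothesis="Moving proof closer to the CTA will increase trial starts.",
    setup="A/B test on the pricing page, 50/50 split, mobile and desktop.",
    result="Variant converted higher; difference held across both devices.",
    business_implication="",
)

gaps = readout.missing_fields()
if gaps:
    print("Incomplete readout, missing:", ", ".join(gaps))
```

The design choice is deliberate: a test without a stated business implication is flagged the same way as a test without a result.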

Common CRO Experiments and High-Impact Examples

The best CRO experiments aren’t “creative.” They’re grounded in observed friction.

When teams ask what to test first, the right answer is usually not a universal best practice. It’s the highest-friction point on the pages that influence revenue or pipeline. Still, some experiment types consistently earn attention because they target common decision barriers.

Landing page experiments

A strong landing page usually succeeds or fails on message match, clarity, and trust.

One of the most impactful tests is CTA personalization. Personalized CTAs outperform standard ones by 202%, based on HubSpot’s analysis of 330,000+ CTAs, which is why segmentation and intent matching deserve more attention than cosmetic changes (Matomo conversion rate optimisation statistics).

That result points to a practical hypothesis model:

- Change: Tailor the CTA to traffic source or audience segment.

- Expected result: More visitors continue to the next step.

- Reason: The page feels more relevant to the intent that brought them there.

Other landing page experiments often include:

- Headline refinement: Clarify the offer faster.

- Proof placement: Move testimonials, logos, or reviews closer to the decision point.

- Page structure changes: Reduce clutter so the next step becomes obvious.

Form and lead capture tests

Forms fail when they ask for too much, too early, or without enough confidence-building context.

Common hypotheses here include:

- Remove unnecessary fields: Expect higher completion because friction drops.

- Improve field order: Expect fewer abandonments because the form feels easier to start.

- Add supporting proof near submission: Expect stronger completion because perceived risk falls.

In SaaS and B2B, this often matters more than changing the button itself. If the visitor doesn’t trust the value exchange, the CTA wording won’t save the form.

For an applied example of this kind of work in a SaaS context, this results page shows how trial signup optimization can be framed around funnel behavior rather than isolated page edits: https://ezcaa.com/results/saas-trial-signup-optimization

Product, pricing, and checkout experiments

These pages sit close to revenue, so they deserve disproportionate attention.

On product and pricing pages, strong tests often focus on:

- Social proof depth: Reviews and ratings reduce uncertainty.

- Pricing clarity: Buyers leave when costs or package differences feel ambiguous.

- Mobile usability: A smooth desktop journey can still collapse on smaller screens.

- Checkout simplification: Remove steps, reduce distractions, and make progress visible.

The highest-impact CRO tests usually address hesitation, not aesthetics.

Urgency can help too, but only when it’s credible. Fake scarcity is easy to spot and usually harms trust over time.

What usually doesn’t work

Some experiments consume a lot of energy and produce little value:

- Tiny visual tweaks without research: Teams overestimate their impact.

- Testing low-traffic pages first: Even good ideas won’t matter much there.

- Running tests without a segmentation view: Mobile and desktop often need different fixes.

- Declaring wins too early: Short-term variation gets mistaken for improvement.

Good experimentation is less about volume and more about relevance. The strongest pipelines of tests come from understanding user behavior at each stage of the funnel, then choosing the changes most likely to reduce friction there.
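The segmentation point is easy to demonstrate with numbers. The sketch below assumes pre-aggregated rows of (segment, visitors, conversions). The figures are hypothetical and chosen to show how a blended rate can hide a weak mobile experience.

```python
from typing import Dict, Iterable, List, Tuple

Row = Tuple[str, int, int]  # (segment, visitors, conversions)


def rate_by_segment(rows: Iterable[Row]) -> Dict[str, float]:
    """Conversion rate (%) per segment, summing rows that share a segment."""
    totals: Dict[str, List[int]] = {}
    for segment, visitors, conversions in rows:
        seg_totals = totals.setdefault(segment, [0, 0])
        seg_totals[0] += visitors
        seg_totals[1] += conversions
    return {
        seg: conv / vis * 100
        for seg, (vis, conv) in totals.items()
        if vis > 0
    }


def blended_rate(rows: Iterable[Row]) -> float:
    """Overall conversion rate (%) across all segments."""
    rows = list(rows)
    visitors = sum(r[1] for r in rows)
    conversions = sum(r[2] for r in rows)
    if visitors <= 0:
        raise ValueError("no visitors in any segment")
    return conversions / visitors * 100


# Hypothetical data: the blended rate looks fine, mobile does not.
rows = [("desktop", 4_000, 160), ("mobile", 6_000, 90)]
rates = rate_by_segment(rows)
overall = blended_rate(rows)

print(f"Blended: {overall:.1f}%")
for segment, rate in rates.items():
    print(f"{segment}: {rate:.1f}%")
```

In this example the blended 2.5% would pass a casual review, while the mobile segment converts at well under half the desktop rate.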

Choosing Your Path: In-House vs Agency CRO Teams

Once a company decides CRO should be a system, not an ad hoc task, the next question is resourcing.

Some companies build in-house. Some hire an agency. Others need something in between because they want specialized execution without building a full team from scratch.

Decision criteria

This isn’t only about cost. It’s about speed, operating maturity, and how much cross-functional coordination the company can support.

An in-house hire may understand the product well but still need analytics support, design resources, engineering time, and testing infrastructure. A traditional agency may bring process and tools but operate too far from commercial realities if the engagement is generic.

A more useful comparison looks like this.

In-house team strengths and limits

An internal team works well when the company already has:

- Consistent traffic volume: Enough data to support ongoing experimentation.

- Embedded support: Design, engineering, analytics, and product access.

- Leadership patience: CRO compounds over time, but it still needs operating discipline.

- Documentation habits: Learnings need to survive personnel changes.

The trade-off is that many internal teams become dependent on whatever other departments can spare. CRO work gets deprioritized because roadmap work or campaign launches always feel more urgent.

Traditional agencies and where they fit

An agency can bring structure quickly. That’s useful when the business needs outside perspective, tool fluency, and testing experience without a long hiring cycle.

The downside is variation. Some agencies deliver disciplined experimentation. Others sell audits, dashboards, and generic recommendations that never become an operating loop.

This is also where the AI layer is becoming more relevant. Emerging AI-powered CRO tools, including virtual try-on for e-commerce and smart live chat, are becoming more practical for optimization programs, especially when paired with human strategy rather than used as standalone gimmicks (Nebulab on ecommerce virtual try-on).

The hybrid model many companies need

For many SaaS, e-commerce, and B2B teams, the best answer isn’t pure in-house or pure outsourced execution.

They need a model that gives them:

- A fixed sprint cadence

- Cross-functional specialists

- Fast research and implementation

- Clear readouts tied to commercial outcomes

- Flexibility to shift focus as evidence changes

That’s where a squad model can make sense. One option is Ezca Agency, which works in focused 90-day sprints across channels including CRO, paid media, SEO, email, and content. The practical value of that setup is not that it “does marketing.” It’s that it creates a repeatable cycle for identifying friction, testing changes, and reallocating effort toward the highest-return opportunities.

For leaders weighing internal build versus external support, this broader comparison can help frame the decision in operational terms: https://ezcaa.com/blog/in-house-vs-agency-marketing

The wrong resourcing model doesn’t just slow testing. It breaks the feedback loop that makes CRO useful.

Making CRO Your Competitive Advantage in 2026

The companies that win with CRO don’t treat it as a website project.

They treat it as a management system for improving how demand becomes revenue. That shift matters more now because traffic is expensive, attribution is imperfect, and executive teams can’t afford leaky funnels disguised as growth.

What is conversion rate optimization, then, in the form that helps a business? It’s a disciplined process for turning existing attention into more purchases, better pipeline, and stronger acquisition economics.

The practical first steps are straightforward:

- Audit tracking: Make sure macro and micro conversions are defined correctly.

- Map the funnel: Identify the pages and steps most tied to revenue or qualified pipeline.

- Find one major friction point: Use analytics, behavior tools, and customer feedback together.

- Run a focused test cycle: Prioritize changes with commercial relevance, not cosmetic appeal.

- Review results against business health: Don’t stop at the conversion rate headline.

The advantage compounds when this becomes routine. Teams get faster at spotting friction. Testing gets sharper. Paid and organic acquisition become more valuable because the site converts intent more efficiently.

That’s why the strongest CRO programs are built in sprints, not in isolated experiments. A sprint creates momentum, accountability, and enough focus to turn insight into measurable action.

If your team wants a more systematic CRO program, Ezca Agency helps SaaS, e-commerce, and B2B companies run focused 90-day growth sprints that connect research, experimentation, and channel execution to measurable business outcomes.