A Guide to A/B Testing SEO Without Ruining Your Rankings

Unlock growth with our complete guide to A/B testing SEO. Learn how to design, run, and analyze tests that boost traffic and revenue, without Google penalties.

For a marketing leader or business owner, gut feelings aren't a sustainable growth strategy. A/B testing for SEO ends subjective debates, replacing them with a data-driven process that proves what actually moves the needle on rankings, traffic, and, most importantly, revenue. This is how you turn SEO from a mysterious cost center into a predictable profit driver.

Stop Guessing, Start Testing: The Real Impact of A/B Testing in SEO

For too long, SEO has been treated like a dark art, where success hinges on appeasing the ever-changing "Google algorithm." This leads to marketing meetings bogged down by arguments over which headline feels better or whether one more keyword will make a difference. This guesswork isn't just inefficient; it's expensive. Every decision made without data is a gamble with your marketing budget.

A/B testing SEO applies the scientific method to your optimization. Instead of rolling out a change across your entire site and hoping for the best, you run a controlled experiment. You apply the change to a portion of comparable pages (the variant) and leave the rest untouched (the control). Comparing the two groups over the same period is the most reliable way to isolate the impact of that one change and measure its direct effect on your business goals.
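One way to split pages into the two groups is to hash each URL so the assignment stays stable from one deployment to the next. This is a minimal sketch, not a prescribed method: the function name `assign_group`, the `salt` value, and the example paths are all assumptions made for illustration.

```python
import hashlib


def assign_group(url: str, salt: str = "title-test-1", variant_share: float = 0.5) -> str:
    """Deterministically place a page URL in 'control' or 'variant'.

    The salt is a hypothetical experiment name; changing it reshuffles the groups.
    """
    digest = hashlib.sha256(f"{salt}:{url}".encode("utf-8")).hexdigest()
    # Map the first 8 hex digits to a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "variant" if bucket < variant_share else "control"


if __name__ == "__main__":
    # Hypothetical product page paths.
    for page in ["/products/red-shoes", "/products/blue-hat", "/products/green-scarf"]:
        print(page, assign_group(page))
```

Hashing gives a repeatable split, but it does not balance the groups by historical traffic. If a handful of pages dominate your organic sessions, you may want to check that both groups are comparable before the test starts.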

From Theory to Tangible ROI

Imagine your e-commerce team believes adding "Free Shipping" to product page titles will boost clicks from search results. Instead of a risky, site-wide update, you test it on 50% of your product pages.

After running the test for four weeks, the data comes in. The variant pages with the new titles show:

- A 12% increase in organic click-through rate (CTR).

- A 7% lift in organic sessions.

- A 4% increase in revenue attributed directly to organic traffic.

You now have an undeniable business case. You've proven a small copy change directly generated more traffic and sales. This is the power of moving from subjective opinions to validated results. It's no longer about what you think Google wants; it’s about what you can prove your audience and the search engine respond to.

Of course, to measure this impact accurately, you need a solid foundation. It's essential to understand what SERP tracking is and its strategic importance, as it’s the bedrock of any serious SEO testing program.

Key Takeaway: SEO A/B testing turns optimization from an art of intuition into a science of evidence. It lets you de-risk big changes, prove ROI, and build a compounding advantage over competitors who are still just guessing.

This data-first approach does more than improve metrics; it silences internal debates. When you can definitively show that Test A drove a 15% lift in qualified leads while Test B fell flat, allocating resources becomes a straightforward business decision, not a political battle.

Embracing this discipline requires a strategic choice—manage it yourself or bring in experts, a classic in-house vs. agency marketing dilemma. Either way, building a culture of testing is how you create a predictable, repeatable engine for organic growth.

Designing SEO Experiments That Deliver Actionable Insights

A poorly designed test is worse than no test at all. It’s a surefire way to burn budget, chase phantom gains, and lead your strategy down the wrong path. Effective A/B testing for SEO doesn't start with a fancy tool; it begins with a rigorous design process.

This framework separates a one-off lucky guess from a reliable system that generates actionable insights and drives real business growth.

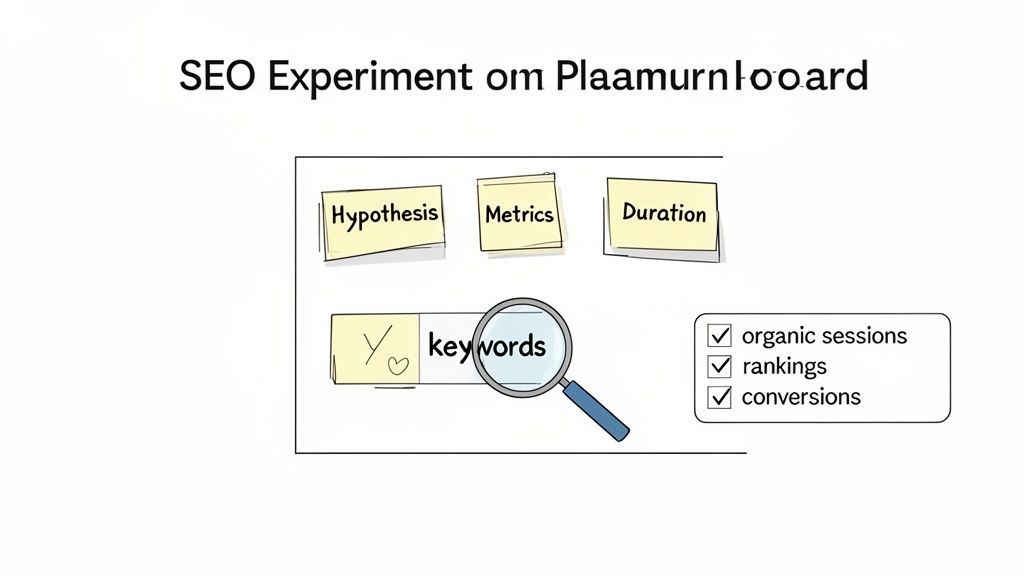

At the heart of any valid experiment is a strong, testable hypothesis. A vague goal like "improving our pages will increase traffic" is useless for testing. A powerful hypothesis is specific, measurable, and built on data or a clear strategic assumption.

Formulating a Powerful Hypothesis

Your best test ideas come from digging into user behavior and keyword data. Look for gaps, patterns, and opportunities. Are users bouncing off certain pages? Are competitors winning high-intent keywords like "vs" or "alternative"? These observations are gold.

Use them to build a clear "If we do X, then we expect Y to happen" statement.

Here are a few examples of strong, business-focused hypotheses:

- For an e-commerce site: "If we add a 'Key Specs' summary box above the fold on our product pages, then we will increase average time on page and reduce bounce rate, leading to improved rankings for core product terms."

- For a B2B SaaS company: "If we add a 'vs' comparison table targeting competitor keywords to our feature pages, then we will capture more long-tail traffic and increase demo sign-ups from organic search."

- For a content-heavy site: "If we add audio versions of our top blog posts, then we will increase user engagement signals (like dwell time) and see a lift in organic sessions as Google rewards the enhanced media."

Each is specific. It defines the change and predicts a measurable outcome impacting SEO performance.

Choosing Metrics That Matter

Vanity metrics can kill a testing program. An increase in impressions is meaningless if it doesn't result in more clicks or, more importantly, conversions. Focus on metrics that reflect true SEO impact and connect directly to revenue.

Your primary metrics must include these non-negotiables:

- Organic Sessions or Clicks: The most direct measure of traffic change.

- Keyword Rankings: Are you moving up for the terms your test is targeting?

- Organic CTR: Crucial for tests involving page titles or meta descriptions.

- Goal Completions: Tracking leads, sales, or sign-ups from your organic audience is everything. This is where the ROI is.

Secondary metrics provide context, helping you understand why you're seeing the results. These are typically user behavior signals like bounce rate, time on page, and pages per session. A lift in these behavioral metrics can precede a rankings climb.

By focusing on bottom-line metrics like organic conversions and revenue per visitor, you shift the conversation from "did traffic go up?" to "how much business value did this test generate?"

This is a core principle at Ezca. Our performance squads are held accountable for driving measurable business outcomes, not just traffic stats. We design every experiment with clear conversion goals from the start, ensuring our efforts are always tied directly to your bottom line.

Ensuring Statistical Significance and Test Duration

You see a 10% lift in traffic. Is that a real win or random noise? The answer lies in statistical significance. This tells you how confident you can be that your results weren't a fluke. The industry standard to aim for is a 95% confidence level.

To get there, you need a large enough audience (sample size) and enough time.

- Sample Size: High-traffic pages might hit significance in a couple of weeks. Lower-traffic pages might need to run for over a month to gather enough data for a reliable conclusion.

- Test Duration: SEO tests have a unique wrinkle—you must run them long enough for Googlebot to crawl, render, and index both your original and variant pages multiple times. A minimum of four weeks is a safe bet for most experiments.

Ending a test too early is a common and costly mistake. You risk making a huge decision based on a short-term fluctuation or incomplete data. A properly designed experiment has a pre-defined duration and sample size target, creating a repeatable process for perpetual optimization.
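To make the 95% confidence threshold concrete, here is a rough sketch of a two-proportion z-test comparing the CTR of the control and variant groups. It is a simplification: it treats every impression as an independent observation, which real search data only approximates, and the function name and the counts in the usage example are hypothetical.

```python
import math
from typing import Tuple


def two_proportion_z_test(clicks_a: int, impressions_a: int,
                          clicks_b: int, impressions_b: int) -> Tuple[float, float]:
    """Return (z statistic, two-sided p-value) for the CTR difference B minus A."""
    p_a = clicks_a / impressions_a
    p_b = clicks_b / impressions_b
    # Pooled CTR under the assumption that both groups perform the same.
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    z = (p_b - p_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: control (A) vs. variant (B).
    z, p = two_proportion_z_test(400, 10_000, 460, 10_000)
    print(f"z = {z:.2f}, p = {p:.4f}, significant at 95%: {p < 0.05}")
```

A p-value below 0.05 corresponds to the 95% confidence level mentioned above. Decide on the duration and sample size before launch and run the check once at the end, rather than stopping the moment the number first dips under the threshold.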

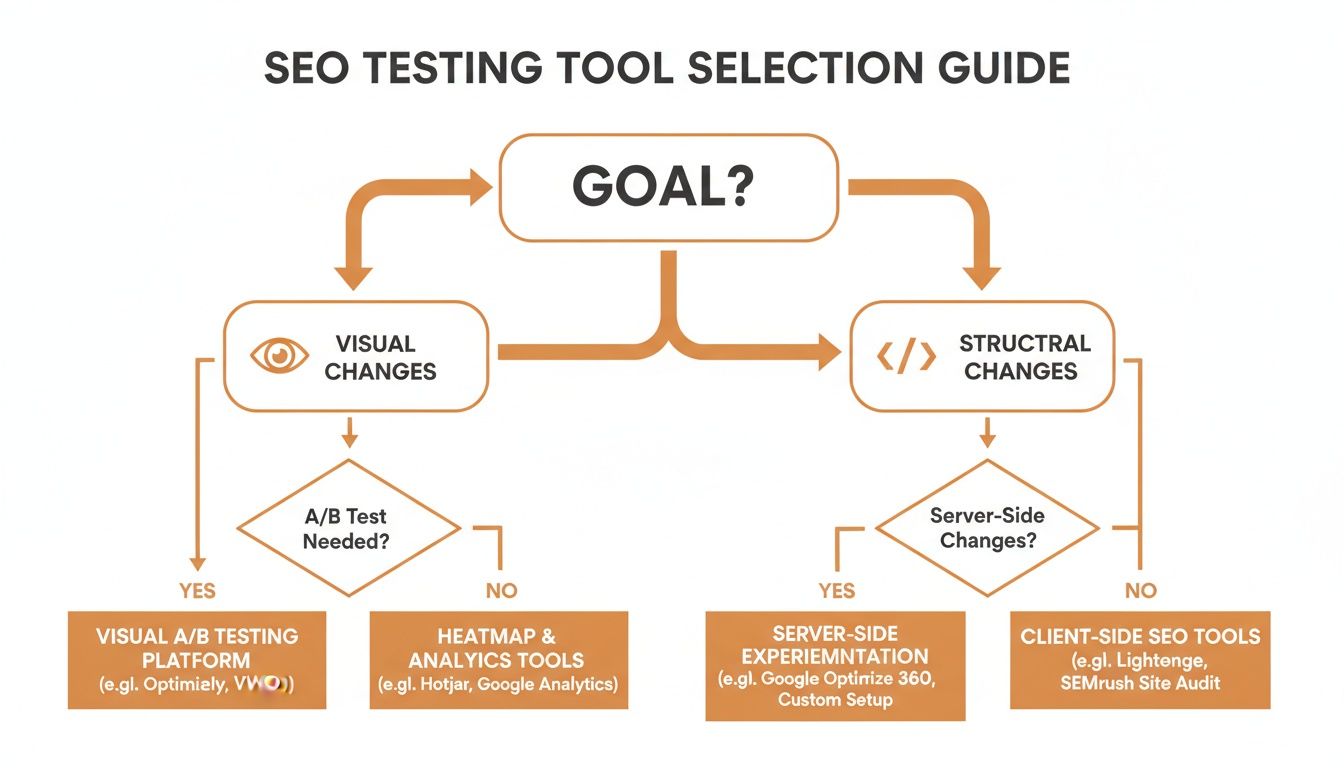

Choosing Your SEO Testing Method: The Strategic Decision

Choosing the right technical setup for your SEO A/B testing program is a foundational decision. Get it right, and you’re on the path to reliable data and meaningful growth. Get it wrong, and you could waste time, burn developer resources, and even hurt your site's performance.

This isn't just a technical choice; it's a strategic one. Your method determines your budget, speed, and the types of tests you can run. Let’s walk through the three main ways to set up SEO experiments: client-side, server-side, and proxy-level testing.

Client-Side Testing: The Quick Start

Client-side testing is often the first taste of experimentation. It uses JavaScript to modify a page inside the user's browser. Tools like VWO, Optimizely, and the now-discontinued Google Optimize work this way.

The appeal is obvious: it’s fast and simple. A marketing manager can often run a test—changing a headline or swapping an image—without pulling in a developer.

But for serious SEO A/B testing, the drawbacks are significant.

- Performance Hits: The JavaScript can slow your site down and cause a "flicker," where visitors see the original page for a moment before the test version loads—a jarring user experience.

- Blind to Googlebot: Since changes happen in the browser, Googlebot may not execute the JavaScript and "see" your variant. This makes the setup unreliable for testing anything that matters for SEO, like internal linking or schema markup.

Client-side is fine for small visual tweaks, but it's not robust enough for changes expected to directly impact organic rankings.

Server-Side Testing: The Robust Engine

This is where serious players operate. With server-side testing, the logic happens on your web server before the page is sent to the browser. Your server decides whether to serve the original page (A) or the variant (B).

The result is a clean, flicker-free experience for both users and search engine bots. It's the gold standard for testing significant changes you need Google to evaluate properly.

Key Insight: Server-side testing guarantees that what your users see is exactly what Googlebot sees. For any test involving structural HTML, canonical tags, or major content changes, this is the only way to get truly accurate SEO data.

The catch is the heavy lifting required. Implementing a server-side test means your development team has to build the A/B logic into your site’s backend, consuming significant engineering resources.
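As a rough illustration of what that backend logic can look like, the sketch below builds a page title on the server. The `VARIANT_PATHS` set, the `render_title` function, and the "Free Shipping" suffix are hypothetical; a real implementation would live inside your own framework's templating layer.

```python
from html import escape

# Hypothetical set of pages chosen for the variant group.
VARIANT_PATHS = {"/products/red-shoes", "/products/blue-hat"}


def render_title(path: str, base_title: str) -> str:
    """Build the <title> tag on the server, before any HTML is sent.

    The decision depends only on the page path, never on the user agent,
    so browsers and crawlers receive identical markup for a given URL.
    """
    title = f"{base_title} | Free Shipping" if path in VARIANT_PATHS else base_title
    return f"<title>{escape(title)}</title>"


if __name__ == "__main__":
    print(render_title("/products/red-shoes", "Red Shoes"))
    print(render_title("/products/green-scarf", "Green Scarf"))
```

The point of the design is that nothing in the function inspects who is asking. Every request for the same URL gets the same HTML, which is what keeps the test clear of cloaking.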

Proxy-Level Testing: The Best of Both Worlds

Proxy-level testing offers the power of a server-side setup with a fraction of the internal effort. It’s a clever middle ground, used by tools like SplitSignal.

A proxy server sits between your website and the internet. It intercepts traffic, makes changes to the HTML on the fly, and then delivers the final page to the user or Googlebot.

This gives business leaders major wins:

- Minimal Developer Time: Your engineers don't have to write test code. The proxy handles everything, freeing them to work on the core product.

- Perfect for SEO: Because the HTML is modified before delivery, Googlebot crawls the final, finished version. This makes it well suited to almost any SEO A/B test you can dream up.

- Fast and Clean: You get all the benefits of a server-side test—no flickering, no performance lag—without the implementation headache.

The main consideration is routing traffic through a third-party service, which requires a DNS change. But for companies that want to scale SEO testing without derailing development sprints, it's often the smartest way forward. This is why we use a proxy-based approach at Ezca; it lets us deploy sophisticated SEO experiments quickly for clients, getting them reliable data without bogging down their internal teams.

Comparison of SEO A/B Testing Methods

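Choosing the right technical setup is about balancing resources, speed, and data accuracy. Here's a quick breakdown of how these three methods stack up:

- Client-side: Fastest to launch and needs little or no developer time, but it can slow the page and cause flicker, and Googlebot may not see the variant.

- Server-side: Flicker-free, and Googlebot sees exactly what users see, but it requires significant engineering work in your backend.

- Proxy-level: Flicker-free and visible to Googlebot with minimal developer time, but traffic is routed through a third-party service, which requires a DNS change.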

While client-side is a tempting entry point, any serious SEO testing program will quickly outgrow it and benefit from the reliability of a server-side or proxy solution.

How to Run SEO Tests Safely and Effectively

Running an ambitious SEO test can feel like you're one wrong move away from disaster. The fear is real: could this test accidentally sabotage the very rankings you’ve worked so hard to build?

With a disciplined process, you can run powerful experiments that deliver clear insights without putting your site at risk. It’s all about turning that risk into a calculated, strategic advantage.

The biggest worry I hear from marketing leaders is about triggering penalties for duplicate content or cloaking. These are legitimate but avoidable concerns. Google isn’t against testing; they’ve given us clear guidelines on how to do it right. The secret is to be upfront with Googlebot about what you're doing.

Keeping Google Happy: Canonicalization and Cloaking

When you run a split test with multiple URLs (like /page-a vs. /page-b), you're temporarily creating duplicate content. To keep this from confusing Google, you need to tell search engines which version is the "real" one.

The rel="canonical" tag is your best friend. Your test variation must have a canonical tag pointing back to the original control page. It's like a note for Google saying, "This is just an experiment. Please send all ranking signals to the original URL."

For split URL tests, also use a 302 temporary redirect to send traffic to the variant. A 302 tells search engines this is a temporary detour, so they keep the original URL in their index. A 301 signals a permanent move, which can lead Google to index the variant URL in place of the original and pass link equity to it, effectively ending your test prematurely.

Cloaking means showing different content to users than you show to Googlebot. It can be an accidental side effect of client-side testing if the JavaScript fails to execute for the crawler. This is why server-side or proxy testing is safer for SEO A/B testing: bots and users receive the exact same HTML.
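The two signals described above, the canonical tag on the variant and the temporary redirect, can be sketched as a pair of small helpers. The function names and the `example.com` URLs are assumptions for illustration; how you attach the tag and the redirect depends on your own server or framework.

```python
from html import escape
from typing import Dict, Tuple


def canonical_link(control_url: str) -> str:
    """Tag to place in the variant page's <head>, pointing at the control URL."""
    return f'<link rel="canonical" href="{escape(control_url, quote=True)}">'


def temporary_redirect(variant_url: str) -> Tuple[int, Dict[str, str]]:
    """Status code and headers for a temporary (302) redirect to the variant."""
    return 302, {"Location": variant_url}


if __name__ == "__main__":
    # Hypothetical control and variant URLs.
    print(canonical_link("https://example.com/page-a"))
    print(temporary_redirect("https://example.com/page-b"))
```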

If you're trying to figure out which testing tool is right for your goal, this flowchart can help you decide.

For any deep, structural changes impacting SEO, you need a method that modifies the HTML before it reaches the browser, making proxy or server-side solutions the clear winner.

The Test Lifecycle: From Launch to Winner

An SEO test that runs forever isn't a test—it's a technical liability. Every experiment needs a clear beginning, middle, and end. A common mistake is ending a test too early, before Googlebot has fully processed the changes.

As a rule of thumb, plan for your test to run for a minimum of four weeks. This gives you enough runway for:

- Sufficient Data Collection: You need enough traffic to reach statistical significance, typically a 95% confidence level.

- Googlebot Crawls: You have to give the crawler time to visit both your control and variant pages multiple times, so the changes are indexed and have a chance to show up in rankings and clicks.

Once you have a clear winner, act on it immediately. Remove all testing code and implement the winning changes permanently on the original URL.

If you ran a split test with a 302 redirect, switch it to a 301 redirect, pointing the losing URL to the winner. This consolidates all authority and ensures you get the full, long-term benefit of your findings. Managing these technical details separates a good idea from a lasting SEO gain.

If you're dealing with complex user behavior goals alongside your SEO tests, explore what a dedicated conversion rate optimization service can offer.

From Data to Dollars: Analyzing Results and Proving ROI

Your test has finished. Now the real work begins. Getting an experiment live is one thing; turning that data into a clear business case is another. This is the moment you connect SEO efforts directly to dollars and cents, proving the value of your work and getting buy-in for the next test.

Before you jump into comparisons, you must isolate your signal from the noise. Your analytics platform tracks everything, but for an SEO test, only organic traffic matters. Create a dedicated segment in analytics to filter for organic traffic only. This critical step ensures a sudden paid social spike doesn’t accidentally skew your results and lead to the wrong conclusion.

With clean, organic-only data, you can compare the control against the variant. But don't just stop at "Did we get more traffic?" Dig into the metrics the C-suite actually cares about.

Measuring Bottom-Line Impact

A great analysis zeroes in on financial performance. You need to confidently answer, "How did this test impact our bottom line?" This means tracking the entire customer journey for both control and variant user groups.

Here are the business metrics you should be laser-focused on:

- Organic Conversion Rate: Did the variant get more people to sign up for a demo, make a purchase, or fill out a lead form? A 5% lift in conversion rate can be more valuable than a 10% lift in raw traffic if the extra visitors don't convert.

- Revenue Per Organic Visitor: For e-commerce, this is the gold standard. Tally the total revenue from each group and divide it by the number of organic visitors. This tells you which version makes more money.

- Lead Quality: In B2B, not all leads are equal. Sync with your sales team to score leads from each test group. I’ve seen tests generate fewer leads but win because those leads were much higher quality and more likely to close.
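The first two metrics come down to simple arithmetic on organic-only data. The sketch below assumes you can export session records with a test group, a traffic medium, a conversion flag, and revenue. The `Session` fields and the `summarize` function are hypothetical, and each session is counted as one visitor for simplicity.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass
class Session:
    group: str       # "control" or "variant"
    medium: str      # e.g. "organic", "cpc", "social"
    converted: bool
    revenue: float


def summarize(sessions: Iterable[Session], group: str) -> Dict[str, float]:
    """Organic conversion rate and revenue per organic visitor for one test group."""
    # Keep organic traffic only, so paid or social spikes cannot skew the result.
    organic = [s for s in sessions if s.group == group and s.medium == "organic"]
    visitors = len(organic)
    if visitors == 0:
        return {"visitors": 0, "conversion_rate": 0.0, "revenue_per_visitor": 0.0}
    conversions = sum(1 for s in organic if s.converted)
    revenue = sum(s.revenue for s in organic)
    return {
        "visitors": visitors,
        "conversion_rate": conversions / visitors,
        "revenue_per_visitor": revenue / visitors,
    }
```

Running `summarize` once for `"control"` and once for `"variant"` on the same export gives the two rows you need for a side-by-side comparison.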

By focusing on revenue and lead quality, you shift the conversation from SEO tactics to business growth. A report showing a $15,000 increase in monthly recurring revenue from a title tag test is infinitely more powerful than one showing a simple CTR bump.

Once a test is complete, the next step is to connect its results to financial outcomes by applying methods for measuring marketing effectiveness and proving ROI. This transforms your SEO program from a cost center into a documented profit engine.

Communicating Results to Stakeholders

How you share findings is as important as the data itself. Your leadership team doesn’t have time to wade through a messy spreadsheet. Your job is to tell a clear, concise story connecting a specific action to a tangible business result.

A simple reporting structure works best:

- The Hypothesis: What was the "if-then" statement you were testing?

- The Punchline: A one-sentence summary of the outcome (e.g., "The new headline increased qualified MQLs by 15% with 97% statistical confidence.").

- The Core Metrics: A small, easy-to-read table showing the change in organic sessions, conversion rate, and revenue or lead value.

- The Business Impact: The bottom-line projection. What's the estimated annual revenue increase if the winner is rolled out site-wide?

- The Recommendation: A clear, unambiguous call to action, like "Roll out the winning variant to all product pages."
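For the business impact line, a back-of-the-envelope projection is usually enough. The sketch below assumes the lift measured in the test carries over unchanged to the rest of the site, which is an optimistic simplification. The function name, its parameters, and the figures in the usage example are hypothetical.

```python
def projected_annual_increase(monthly_organic_revenue: float,
                              relative_lift: float,
                              rollout_share: float = 1.0) -> float:
    """Estimate the extra annual revenue if the winning variant is rolled out.

    monthly_organic_revenue: current organic revenue per month for the tested page type
    relative_lift: measured revenue lift from the test, e.g. 0.04 for 4%
    rollout_share: fraction of that revenue the rollout will actually cover
    """
    return monthly_organic_revenue * relative_lift * rollout_share * 12


if __name__ == "__main__":
    # Hypothetical inputs: 100,000 per month in organic revenue and a 4% lift.
    print(round(projected_annual_increase(100_000.0, 0.04), 2))
```

Presenting the estimate as a range, based on the confidence interval of the measured lift, is more honest than quoting a single number.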

This test-analyze-scale loop is the engine of any high-performance growth strategy. It's the core principle behind Ezca's 90-day sprints, where our performance squads continuously run experiments to find what works and then double down on it. We use this exact process to dynamically shift resources toward the changes that drive the most revenue, ensuring our clients see continuous gains. If you want to see more on this, check out our guide on how to increase organic traffic with strategic, data-backed initiatives.

Your A/B Testing SEO Questions, Answered

If you're thinking about SEO testing, you've probably got a few big questions. It's smart to be cautious—there are real risks if you get it wrong, but the rewards are huge when you get it right.

Let's walk through the most common concerns we hear from teams before they dive in, so you can move forward with a clear, data-backed strategy.

How Long Should I Run an SEO A/B Test?

There’s no single magic number. The right duration comes down to two things: gathering enough data to trust your results and giving Googlebot enough time to see the changes.

First, you need statistical significance. Your test pages' traffic volume is the biggest factor. A popular e-commerce product page might reach a 95% confidence level in just two weeks. A lower-traffic B2B blog post could easily need six weeks or more to collect enough data.

At the same time, you have to factor in Google's crawl cycles. If you run a test for only a week, Google might not have even fully indexed your variant page, making the results unreliable.

As a solid rule of thumb, plan for your tests to run for a minimum of four weeks. This window usually gives crawlers enough time to visit your control and variant pages multiple times and allows most pages to gather a statistically relevant amount of data.

Trying to speed this up is one of the costliest mistakes you can make. You'll end up making decisions based on random noise instead of real performance changes.

Can A/B Testing SEO Hurt My Rankings?

Yes, it absolutely can—but only if it's done wrong. When SEO tests go south, it's almost always because of two avoidable technical mistakes: cloaking and duplicate content. The good news is that with the right setup, these risks are easy to manage.

- Cloaking: This is when you accidentally show different content to users than you show to Googlebot. It’s a bigger risk with client-side testing, where the crawler might not execute the JavaScript properly.

- Duplicate Content: This pops up in split URL tests. If you create a variant page on a new URL but don't tell Google which page is the "real" one, you can split your authority and dilute your ranking power.

The fix is simple: follow Google’s own guidelines. Always use a rel="canonical" tag on your variant page that points back to the original control page. This tag tells Google, "This is just a temporary test. Please credit all ranking signals to the original URL." For split tests, use a 302 temporary redirect, not a 301 permanent one.

Following these steps turns a potential disaster into a simple technical checklist. Working with someone who’s done this hundreds of times ensures these details are handled perfectly, protecting your hard-earned rankings.

What's the Difference Between A/B Testing and Split Testing?

People often use these terms interchangeably, but for SEO, the difference is huge. It all comes down to how the test is technically set up.

An A/B test happens on a single URL. A script changes elements on the page for a portion of your audience. For instance, your-site.com/feature might show one headline to 50% of visitors and a different one to the other 50%, all on the same URL. This works well for small changes like button text or hero images.

A split test (or split URL test) is different. You create two separate versions of a page on two different URLs (like site.com/page-a vs. site.com/page-b). You then redirect a percentage of traffic from the original URL to the new variant URL.

For any significant SEO change—like overhauling page structure, adding content sections, or changing internal linking—split testing is almost always the way to go. It gives Googlebot two distinct HTML pages to crawl and evaluate, providing a much cleaner, more reliable signal about how search engines will react.

Ready to move from guesswork to a data-driven growth engine? The performance marketing squads at Ezca specialize in designing and executing flawless SEO experiments that are tied directly to business results. We handle the technical complexities so you can focus on the ROI. Learn how we can build your testing program.