Master b to b market research: Drive Growth & Strategy

Master b to b market research. Discover methodologies and tools, and turn insights into measurable SEO, paid media, and GTM strategy.

A new CMO usually inherits the same problem. The dashboard is full of activity, the pipeline story is fuzzy, and every team has a different opinion about why deals move or stall.

Product says buyers care about features. Sales says procurement is the blocker. Paid media says the market is saturated. SEO says the message is off. Everyone has a theory. Very few teams have b to b market research disciplined enough to tell them which theory deserves budget.

That gap gets expensive fast. It shows up as campaigns built on internal language, landing pages written for the wrong stakeholder, and channel spend that keeps running even when the signal is weak. In B2B, bad assumptions do not just waste clicks. They drag out sales cycles, confuse buying committees, and create friction between marketing and sales.

Research is the correction layer. Not a branding exercise. Not a slide deck for the board. A way to decide what to say, who to say it to, where to spend, and what to stop doing.

Why B2B Market Research Is No Longer Optional

A common failure pattern looks like this. A company launches a new offer after a few internal workshops and a competitor scan. The page goes live. Sales decks are refreshed. Paid campaigns start. Then the market responds with silence.

The issue is rarely effort. It is usually mismatch. The company used its own vocabulary instead of the buyer’s. It aimed messaging at one champion while finance, operations, and technical evaluators were shaping the decision. It assumed the problem was obvious when buyers were still defining it.

That is why b to b market research is now a risk-control function. It helps a team test demand before rolling out new positioning. It exposes which objections deserve content and which are just noise from a few loud calls. It also gives marketing a better basis for budget decisions than instinct.

The upside is too large to ignore. The global B2B eCommerce market is projected to reach $36.16 trillion by 2026, with a 14.5% CAGR, according to SellersCommerce B2B marketing statistics. In a market moving at that scale, the teams that understand buyer intent earlier have a clear advantage.

Research also changes the quality of execution. Instead of asking, “How do we get more traffic?” the better question becomes, “Which buyer problem creates the highest-value demand, and which channel captures it most efficiently?” That is the difference between activity and growth.

For leaders building or refining a B2B marketing strategy, research should sit upstream of campaign planning. It is what keeps launches grounded in market reality instead of internal optimism.

Key takeaway: In B2B, research is not overhead. It is what prevents expensive messaging mistakes before they spread across paid, SEO, sales enablement, and product marketing.

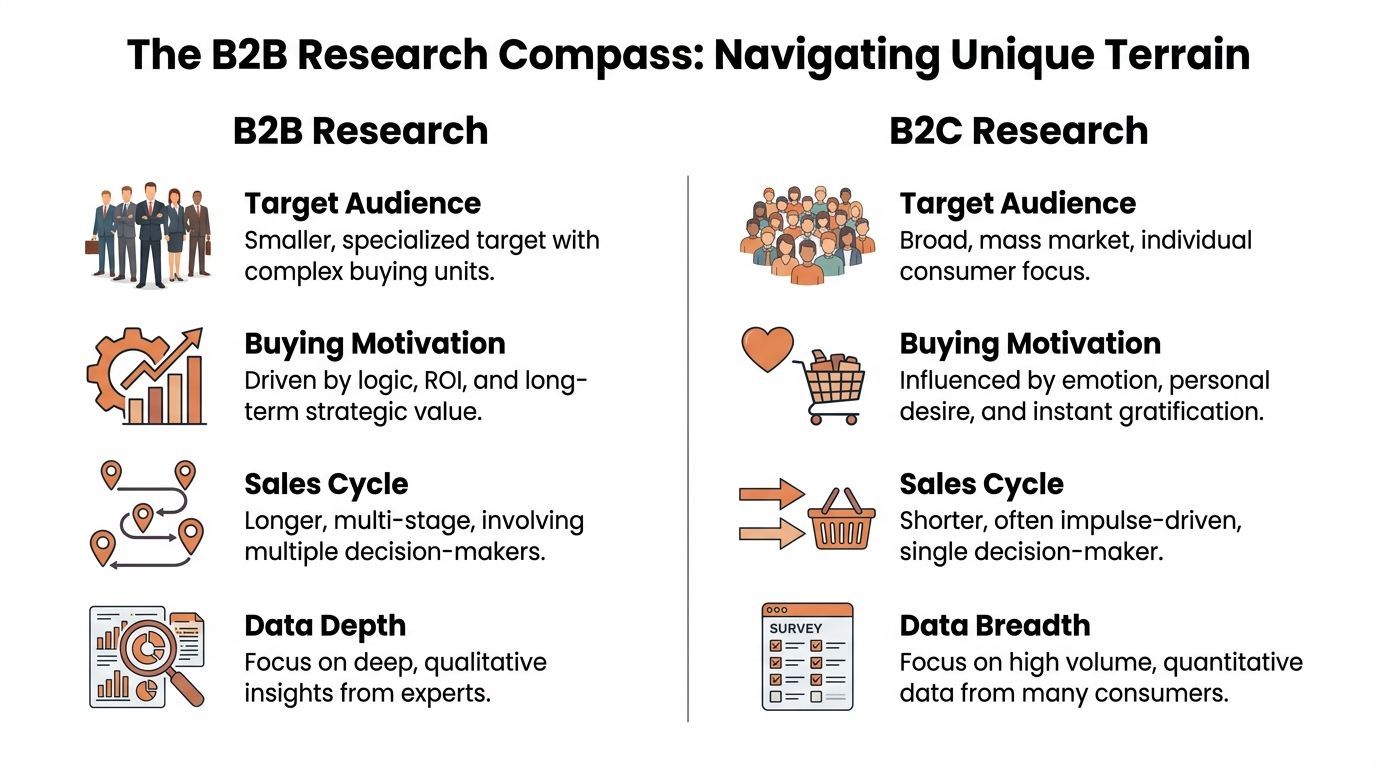

The B2B Research Compass: Navigating Different Terrain

B2C research can often work like a street map. The route is visible, the buyer is usually one person, and the decision can happen quickly.

B2B research is closer to using a compass in rough terrain. The path changes by account, the decision is shared, and the information needs are different at each stage.

Why B2B research gets harder

The first difference is the buying committee. In enterprise settings, successful market intelligence requires mapping 6 to 10 stakeholders, and that work uncovers 70% of hidden pain points missed by title-based targeting, according to Valona’s guide to B2B market intelligence. If your research only speaks to a single decision-maker title, you are probably hearing a partial truth.

The second difference is time. B2B teams deal with longer sales cycles, internal approvals, security reviews, budget signoff, and competing priorities. A buyer can agree with your message and still not buy because another stakeholder sees risk you did not address.

The third difference is decision criteria. B2C often allows more emotional and immediate buying triggers. B2B buyers still respond to strong narrative, but they need proof, fit, and operational confidence. Your research has to reveal how buyers define ROI, what they fear internally, and which claims they trust.

A side-by-side view

That difference is why consumer-style tactics often fail in B2B. A catchy ad can generate attention, but it will not move a deal if the website ignores implementation questions, integrations, or procurement concerns. Likewise, a broad survey can produce clean charts while missing key blockers inside an account.

What experienced teams do differently

Strong B2B teams research the account ecosystem, not just the lead. They ask:

- Who starts the evaluation: The person searching may not control budget.

- Who can stall the deal: Legal, IT, operations, or finance often shape the outcome.

- What each role needs: One buyer wants speed, another wants compliance, another wants adoption.

- Where language breaks down: The words that win click-through are not always the words that survive a sales call.

A practical rule helps here. If your findings can only improve top-of-funnel messaging, your research is too shallow for B2B. Good research should also influence objection handling, proof points, onboarding content, and account prioritization.

Choosing Your Research Methodology

The wrong method creates false confidence. A team runs a survey when it needed interviews, or conducts a few calls when it needed harder measurement. The result is the same. Decisions get made on incomplete evidence.

Method choice should start with the decision you need to make. If the issue is unclear messaging, hidden objections, or why deals stall, start qualitative. If the issue is market sizing, preference validation, or performance benchmarking, use quantitative. Most serious B2B teams need both.

Qualitative vs quantitative research at a glance

Use qualitative when the problem is still fuzzy

Qualitative work is where you learn what buyers mean, not just what they select. This approach is usually the fastest route to finding core friction in a category or offer.

Use interviews when you need to understand:

- Why deals go dark: Ask buyers what happened between first interest and internal review.

- Why messaging misses: Learn the exact language buyers use to describe the problem.

- Why competitors win: Surface trust factors, perceived risk, and implementation concerns.

- Why segments behave differently: Compare what matters to different industries or company types.

A strong interview guide is short. The goal is depth, not interrogation.

A simple interview template

- Walk me through the moment your team started looking for a solution like this.

- What made the problem urgent enough to act on?

- Who else got involved, and what did each person care about most?

- What concerns nearly stopped the purchase or delayed it?

- What language or proof made one option feel safer than another?

Those questions usually produce better signal than asking whether someone “liked” a concept. Buyers are better at describing their process than predicting their future behavior.

Use quantitative when you need confidence at scale

Quantitative work is useful once you know what you want to measure. It can validate whether a pain point is widespread, compare segments, or benchmark perceptions across an audience.

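For surveys, scale matters. A sample size above 300 respondents is typically required for reliable results at a 95% confidence level, and AI-enhanced survey platforms can boost engagement by 20% to 30%, according to Sample Solutions on B2B market research methodologies. Strictly speaking, sample size sets the margin of error rather than guaranteeing significance: under simple random sampling, 300 respondents gives a worst-case margin of roughly ±5.7 percentage points at that confidence level.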
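The link between sample size and precision can be sketched with the standard margin-of-error formula for a proportion. This is an illustrative sketch, not the cited source's method: it assumes simple random sampling, a large population, and the worst-case proportion p = 0.5, and the function names are hypothetical.

```python
import math

Z_95 = 1.96  # z-score for a 95% confidence level (normal approximation)


def margin_of_error(n: int, p: float = 0.5, z: float = Z_95) -> float:
    """Margin of error for a proportion estimated from n respondents."""
    return z * math.sqrt(p * (1 - p) / n)


def required_sample_size(e: float, p: float = 0.5, z: float = Z_95) -> int:
    """Smallest n that keeps the margin of error at or below e."""
    return math.ceil(z ** 2 * p * (1 - p) / e ** 2)


if __name__ == "__main__":
    for n in (100, 300):
        print(f"n={n}: +/-{margin_of_error(n):.1%}")
    print(f"+/-5% needs n={required_sample_size(0.05)}")
```

The same function shows why segment cuts deserve caution. Splitting 300 responses into three equal segments leaves 100 per segment, and the margin for each segment widens to about ±9.8 points, which is often too wide to compare segments with confidence.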

If your team is designing a survey and wants a practical overview of formats, question types, and trade-offs, this guide to quantitative data collection methods is a useful reference.

A simple three-question NPS-style survey

- On a scale of 0 to 10, how likely are you to recommend us to a colleague?

- What is the main reason for that score?

- What is the one thing we should improve first?

That short format works because it gives you one directional metric, one explanation, and one priority. It also fits busy B2B respondents better than a long form.
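The directional metric from the first question is usually computed as a Net Promoter Score, which assumes answers on a 0 to 10 scale: the share of promoters (9 or 10) minus the share of detractors (0 to 6). A minimal sketch, with made-up responses for illustration:

```python
def nps(scores: list[int]) -> float:
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("need at least one response")
    if any(s < 0 or s > 10 for s in scores):
        raise ValueError("scores must be on a 0-10 scale")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical responses, for illustration only
responses = [10, 9, 9, 8, 7, 7, 6, 5, 10, 3]
print(nps(responses))  # 4 promoters, 3 detractors out of 10 -> 10.0
```

With small B2B samples the score swings sharply on a handful of answers, so the open-text reasons from the second and third questions usually carry more weight than the number itself.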

Practical tip: Do not ask a survey to discover what interviews should have uncovered first. Surveys are for measurement. Interviews are for discovery.

The Method Decision Teams Often Miss

The trade-off is not qual versus quant. It is timing.

Start with interviews when:

- the market is shifting,

- the category is crowded,

- the offer is new,

- or the sales team keeps hearing objections that marketing cannot explain.

Start with surveys when:

- you already have hypotheses,

- leadership wants confidence before reallocating budget,

- or you need a clearer view across segments.

A useful sequence is simple. Interviews first. Survey second. Campaign changes third. Then measure the downstream effect in pipeline, not just response rates.

Finding and Recruiting Your Ideal B2B Audience

Much research fails not because of bad questions, but because the wrong people answered them.

B2B teams often recruit by job title alone. That sounds efficient, but it strips out account context. In practice, a title match can still be the wrong participant if that person has little influence, limited visibility, or no direct involvement in the buying process.

Start with the committee, not the contact

Good recruitment starts by mapping who matters inside the account. That matters because B2B market intelligence requires mapping 6 to 10 stakeholders, and that broader view reveals pain points simple title-based targeting misses, as noted earlier in the Valona research.

That means your ideal research audience may include:

- Economic buyers who own budget or influence funding

- Functional owners who feel the operational problem

- Technical evaluators who check feasibility and risk

- Users or managers who care about adoption and workflow fit

If you only interview one of those groups, your findings skew toward one stage of the decision.

Three recruitment channels that work

Your CRM

This is usually the best place to start. Current customers, recently closed-won accounts, lost opportunities, and stalled deals all contain usable research audiences.

Pros:

- the participants are real,

- context is richer,

- and you can tie feedback back to revenue stages.

Cons:

- respondents may already know your brand too well,

- and internal teams can bias who gets invited.

Use CRM recruitment when you want insight tied to actual pipeline behavior.

LinkedIn Sales Navigator

This works well when you need non-customers or a narrow ICP. It is especially useful for exploring a new vertical, job function, or account tier.

Pros:

- precise filtering,

- fast list building,

- and strong fit for outreach-led interview recruiting.

Cons:

- response rates can be uneven,

- and title targeting alone is not enough.

Screen for buying involvement, not just seniority.

Specialized B2B panels

Panels help when the audience is niche, hard to reach, or outside your current network.

Pros:

- faster access to qualified professionals,

- broader market coverage,

- and cleaner separation from your brand.

Cons:

- screening quality varies,

- and you still need a solid participant brief.

Use panels when speed matters and internal lists are too narrow.

Write recruitment copy that respects the respondent

Strong recruitment messages are direct. They tell people why they were selected, how long the conversation will take, what topic will be covered, and what value they get in return.

A useful outreach structure:

- State relevance: Why this person was chosen

- Define the topic: What you want to learn

- Set expectations: Time commitment and format

- Be clear on incentive: Offer something fair and professional

- Avoid sales language: This is research, not pipeline generation

Tip: If a recruitment email sounds like demand generation, serious B2B buyers will ignore it. The message should read like a focused request for expertise.

The Modern B2B Research Toolkit and Data Sources

Good b to b market research rarely lives in one platform. Strong teams build a stack around jobs to be done. One set of tools helps them understand the market. Another collects feedback. A third turns messy conversations into usable patterns.

The mistake is buying tools before defining the workflow. Start with the sequence of work. Then choose the smallest stack that supports it.

Competitive intelligence and secondary research

This layer tells you how the category presents itself before you speak to buyers.

Tools often used here include Gartner, Forrester, Semrush, Ahrefs, Similarweb, Crunchbase, and company review platforms. Each solves a different problem. Analyst research gives strategic framing. Search tools reveal query patterns and competitor visibility. Review sites expose what customers praise, complain about, or compare.

A practical workflow looks like this:

- Pull a competitor’s top non-branded keywords in Semrush.

- Review product pages and pricing pages for repeated claims.

- Compare that language with what customers say in reviews.

- Build an interview guide around the gaps.

That sequence keeps interviews grounded in observable market signals instead of opinion.

Surveys and structured quantitative collection

Once you know what you need to measure, tools like SurveyMonkey, Typeform, Qualtrics, or Google Forms can support structured collection. The platform matters less than the survey design, audience quality, and analysis discipline.

Use survey tools to validate themes, compare segments, or measure perception shifts after a repositioning effort. Keep them short. B2B respondents do not reward unnecessary length.

Qualitative analysis and feedback systems

Interviews generate the best insight, but only if the team can process them well. Zoom, Google Meet, Teams, Dovetail, UserTesting, and Notion can all help here.

A simple system works:

- Record calls with permission.

- Convert the conversation into clean text.

- Tag recurring themes by role, segment, and sales stage.

- Feed the patterns into messaging, content, and sales enablement.

If your team is still reviewing recordings manually, it is worth using a process for transcript audio to text so interviews become searchable and easier to synthesize.
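The tagging step in the system above does not need a dedicated platform to get started. The sketch below counts how often each theme appears per role, segment, or sales stage. The `Excerpt` structure, field names, and sample data are hypothetical and stand in for whatever your research repository exports.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Excerpt:
    """One tagged passage from an interview transcript."""
    role: str          # buying role of the speaker
    segment: str       # industry or company type
    sales_stage: str   # where the account was in the cycle
    themes: tuple[str, ...]


def themes_by(excerpts: list[Excerpt], field: str) -> dict[str, Counter]:
    """Count theme mentions grouped by 'role', 'segment', or 'sales_stage'."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for excerpt in excerpts:
        counts[getattr(excerpt, field)].update(excerpt.themes)
    return dict(counts)


# Hypothetical tagged excerpts, for illustration only
excerpts = [
    Excerpt("finance", "saas", "evaluation", ("total cost", "contract terms")),
    Excerpt("it", "saas", "evaluation", ("integration risk",)),
    Excerpt("finance", "ecommerce", "negotiation", ("total cost",)),
    Excerpt("it", "ecommerce", "evaluation", ("integration risk", "security review")),
]

for role, counter in themes_by(excerpts, "role").items():
    print(role, counter.most_common(2))
```

Grouping the same excerpts by `sales_stage` instead of `role` shows which objections cluster late in the cycle, which is the input sales enablement usually needs.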

Build a stack around decisions, not subscriptions

The best toolkit is often smaller than leaders expect.

| Job to be done | Typical tool types | Output |
|---|---|---|
| Understand category language | Search intelligence, analyst reports, review sites | Market framing and competitor claims |
| Validate patterns at scale | Survey platforms | Directional measurement across segments |
| Capture buyer nuance | Video calls, research repositories, notes systems | Objections, motivations, role-specific needs |
| Share findings internally | Slides, docs, CRM notes, enablement hubs | Usable guidance for marketing and sales |

The test is simple. If a tool does not change a decision, remove it from the stack.

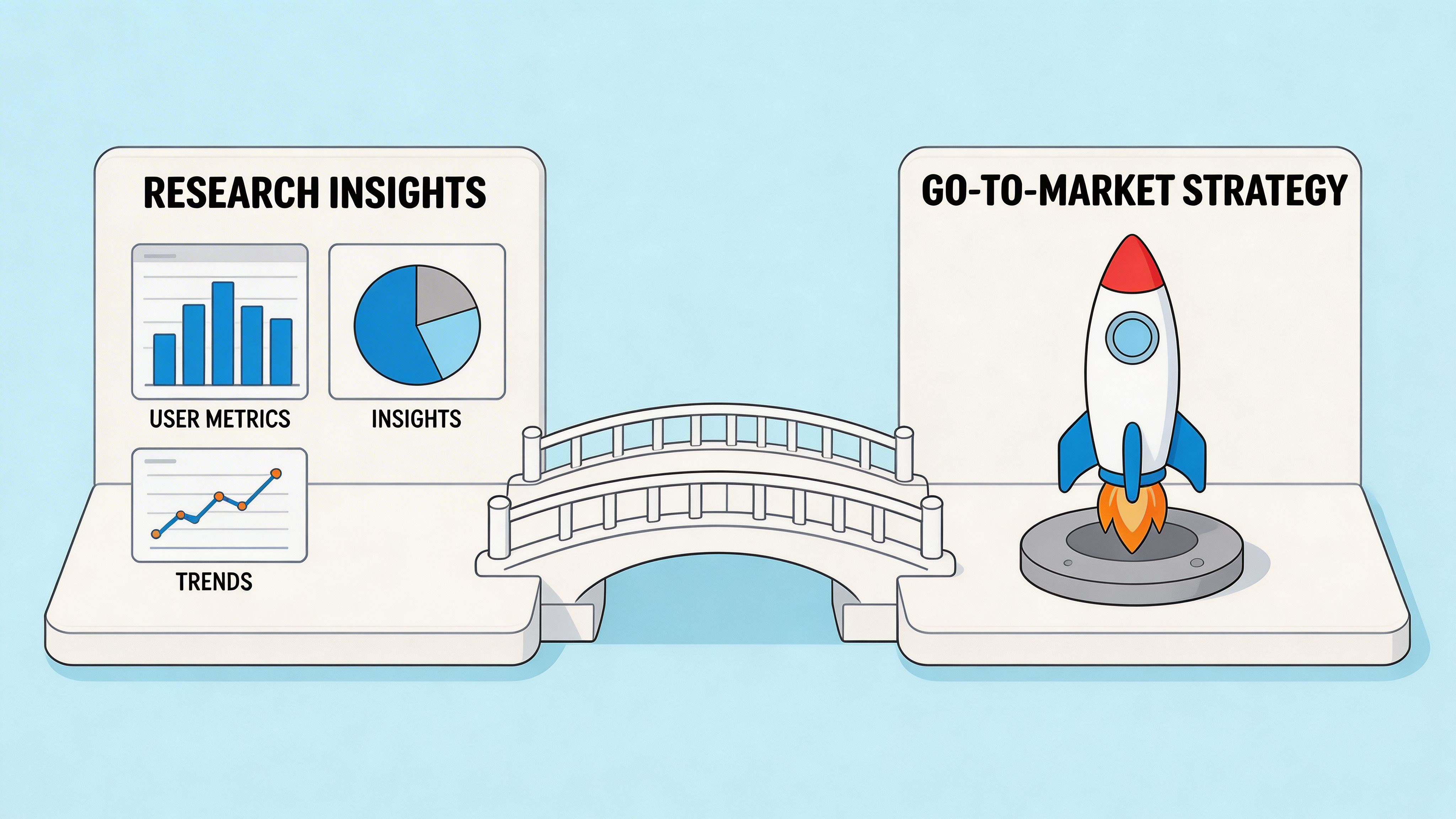

From Insights to Actionable Go-To-Market Strategy

Research has no value until it changes what your team does on Monday.

Many companies break down at this point. They complete interviews, summarize findings, share a deck, and move on without changing keyword targeting, ad copy, landing pages, or sales materials. The research is sound. The execution stays the same.

Start with search because buyers do

Organic search deserves special attention in B2B. B2B companies generate twice as much revenue from organic search as from other marketing channels, and a typical B2B buyer conducts about 12 Google searches before making a purchase decision, according to DBS Website’s B2B marketing statistics and trends.

That has a direct implication. If your research reveals the buyer’s language is different from your internal language, SEO should be one of the first places you act.

For example:

- If buyers say integration capabilities and your site keeps saying platform features, update page titles, H1s, supporting copy, and comparison pages.

- If technical evaluators care about implementation risk, create pages that answer setup, migration, and compatibility questions.

- If finance asks about total value, build content that frames business impact in plain language instead of feature lists.

Turn findings into campaign changes

The best way to operationalize insight is to translate each finding into one visible change.

If you learn that one stakeholder starts the search but another approves the budget

Do this:

- split campaigns by intent and audience,

- build separate landing pages,

- and adjust proof points by role.

Top-of-funnel pages may need problem recognition content. Bottom-of-funnel pages may need procurement, security, or ROI reassurance.

If interviews show buyers distrust broad claims

Do this:

- remove vague superlatives from ads,

- add specifics to landing pages,

- and equip sales with clear objection-handling language.

In B2B, precision builds trust faster than hype.

If the market uses a different category frame than your brand

Do this:

- rethink information architecture,

- revise paid search keyword groups,

- and create comparison pages that meet buyers where they are.

The market does not adapt to your taxonomy. Your go-to-market motion has to adapt to the market’s.

Practical rule: Every insight should map to at least one action in SEO, one action in paid or email, and one action in sales enablement. If it does not, the finding may be too vague to matter.
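The practical rule above can be enforced with a simple coverage check before a finding goes into the plan. This is a sketch under assumed names: the three area labels and the insight log structure are hypothetical, and the sample actions echo the examples in this section.

```python
REQUIRED_AREAS = ("seo", "paid_or_email", "sales_enablement")


def uncovered_areas(actions: dict[str, list[str]]) -> list[str]:
    """Return the required areas that have no action for an insight."""
    return [area for area in REQUIRED_AREAS if not actions.get(area)]


# Hypothetical insight log, for illustration only
insights = {
    "Buyers say 'integration capabilities', site says 'platform features'": {
        "seo": ["Update page titles, H1s, and comparison pages"],
        "paid_or_email": ["Revise paid search keyword groups"],
        "sales_enablement": ["Align call tracks with buyer wording"],
    },
    "Buyers distrust broad claims": {
        "paid_or_email": ["Remove vague superlatives from ads"],
        "sales_enablement": ["Add clear objection-handling language"],
    },
}

for insight, actions in insights.items():
    gaps = uncovered_areas(actions)
    status = "ok" if not gaps else "missing: " + ", ".join(gaps)
    print(f"{insight} -> {status}")
```

An insight that comes back with gaps is not necessarily wrong. It may simply need a sharper statement before anyone can act on it.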

A simple insight-to-action grid

Research-driven account targeting also matters in account-based programs. If your team is refining segmentation and messaging by account tier, examples from B2B account-based marketing results can help frame how execution needs to align with research rather than generic persona work.

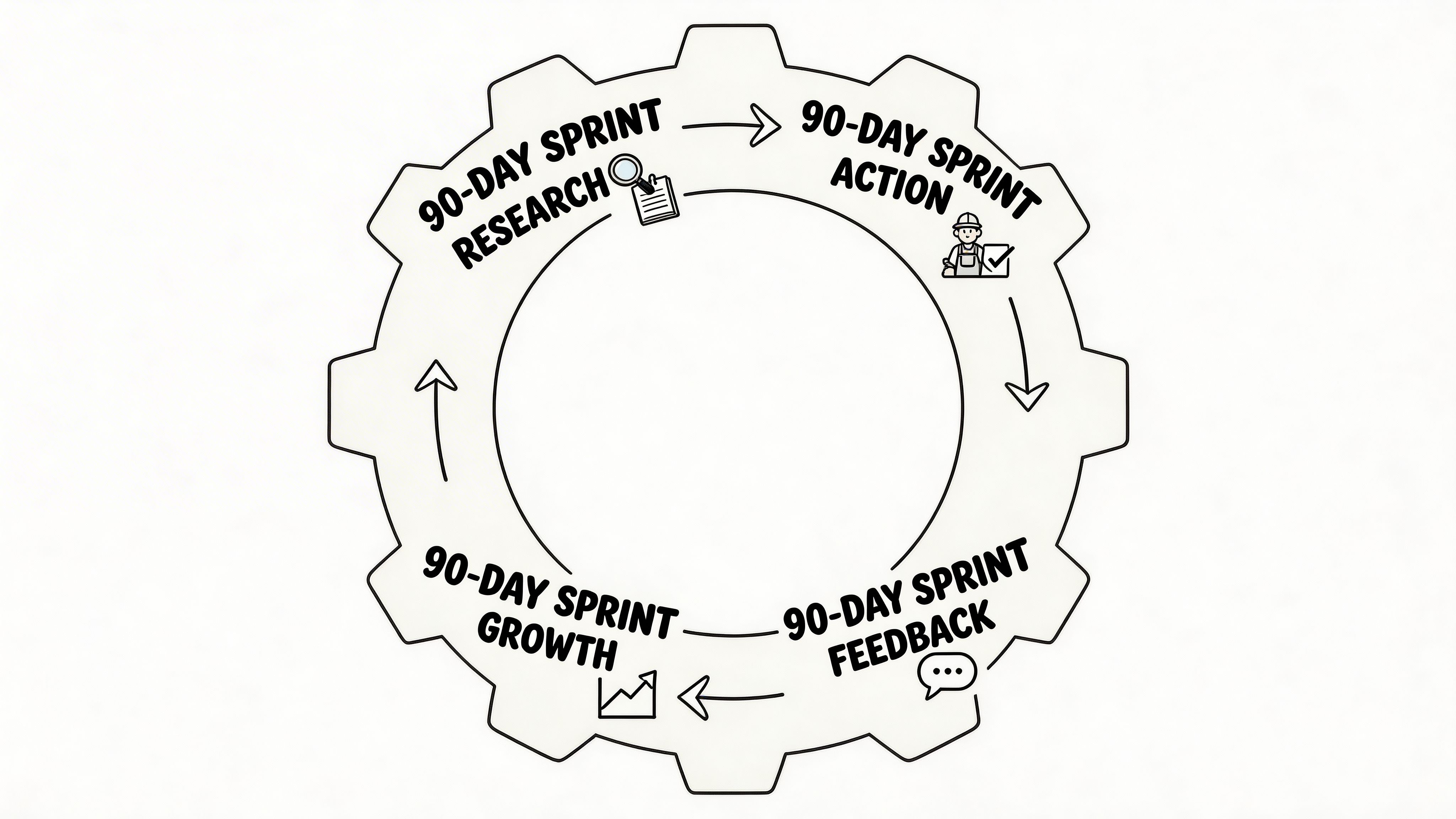

Operationalizing Research in 90-Day Sprints

Research usually fails in execution for one reason. Nobody owns the translation from insight to workflow.

Marketing gets the report. Sales hears a summary. Product sees a few notes. Then the quarter moves on. This is the operational gap many teams feel when segmentation work sounds smart in a workshop but never changes campaigns, handoff criteria, or budget decisions.

That gap is well documented. A Bain analysis highlighted in StrategyKiln’s discussion of B2B market segmentation notes that many businesses struggle to operationalize segmentation on a consistently profitable basis. This is exactly why a sprint model works. It forces teams to turn findings into actions inside a fixed window.

What a 90-day model solves

A sprint model is useful because it creates deadlines for three things that normally drift:

- Decision ownership

- Channel changes

- Measurement

Without that structure, research gets treated as insight generation. With it, research becomes a production input for pipeline growth.

A practical 90-day operating rhythm

Days 1 to 30

Focus on evidence gathering and prioritization.

This phase usually includes:

- customer and lost-deal interviews,

- review mining,

- keyword and competitor analysis,

- and segmentation by account type, need, or buying role.

The output should not be a giant report. It should be a short list of high-confidence hypotheses. Which message is wrong. Which page is under-serving evaluators. Which segment deserves more spend. Which channel should be deprioritized.

Days 31 to 60

Here, teams make visible changes.

Examples:

- rewrite core landing pages based on buyer language,

- launch revised paid search ad groups,

- adjust email sequences by role,

- equip sales with updated call tracks and collateral,

- and create support content around recurring objections.

The key is scope control. Pick the few changes most likely to affect qualified pipeline, not a broad rebrand.

Days 61 to 90

Now you measure what changed and decide what earns more budget.

Look at:

- lead quality by campaign and segment,

- sales feedback on message fit,

- conversion friction on priority pages,

- and whether the revised GTM motion attracts better-fit opportunities.

At this point, a team either scales the winning changes or re-runs research on unresolved friction.

How to connect research to weekly budget decisions

This is where strong operators separate themselves from busy teams.

A useful cadence is weekly, not quarterly. Review buyer signal and performance together. If one segment responds to new messaging and another keeps stalling, budget should move. If organic pages built around researched intent start attracting stronger-fit demand, SEO and content deserve more support. If paid campaigns attract volume but sales reports weak fit, spend should tighten.

That does not require dozens of dashboards. It requires one shared view of:

- target segment,

- message variant,

- channel,

- landing page,

- and downstream sales quality.
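One way to picture that shared view is a single row per segment, message, channel, and page, with a plain rule for what the week's signal suggests. The sketch below is an assumption-heavy illustration: the field names, the use of sales-accepted leads as the quality measure, and the thresholds are all placeholders to be replaced with KPIs your business already trusts.

```python
from dataclasses import dataclass


@dataclass
class WeeklyRow:
    segment: str
    message_variant: str
    channel: str
    landing_page: str
    leads: int
    sales_accepted: int  # leads that sales judged a good fit

    @property
    def fit_rate(self) -> float:
        """Share of leads accepted by sales; 0.0 when there are no leads."""
        return self.sales_accepted / self.leads if self.leads else 0.0


def budget_signal(row: WeeklyRow, low: float = 0.2, high: float = 0.5,
                  min_leads: int = 20) -> str:
    """Suggest a direction; thresholds are placeholders, not benchmarks."""
    if row.leads < min_leads:
        return "hold"      # too little volume to read the signal
    if row.fit_rate < low:
        return "tighten"   # volume without fit
    if row.fit_rate >= high:
        return "scale"     # strong fit at meaningful volume
    return "hold"


# Hypothetical rows, for illustration only
rows = [
    WeeklyRow("mid-market saas", "integration-led", "paid search", "/integrations", 120, 18),
    WeeklyRow("mid-market saas", "integration-led", "organic", "/integrations", 60, 33),
    WeeklyRow("enterprise", "risk-led", "email", "/security", 8, 5),
]

for row in rows:
    print(row.segment, row.channel, f"{row.fit_rate:.0%}", budget_signal(row))
```

The `min_leads` guard matters as much as the thresholds. A weekly cadence produces small numbers, and a rule that reacts to eight leads will move budget on noise.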

What to measure without inventing precision

You do not need fake certainty to manage research ROI. You need a clean decision framework.

Use research to answer:

- Did message-market fit improve?

- Did sales conversations become easier?

- Did one segment show stronger buying intent than another?

- Did certain objections decline after content and page updates?

- Did the handoff between marketing and sales get cleaner?

When possible, align the review around internal KPIs your business already trusts. Pipeline quality. Win-loss themes. Sales cycle friction. Channel contribution. Those are the measures that matter operationally.

Key takeaway: Research becomes revenue work only when a team gives it an owner, a deadline, and a budget consequence.

Where external support can make sense

Some teams can run this in-house. Others cannot because the bottleneck is not strategy. It is execution capacity, research discipline, or cross-channel coordination.

That is usually the primary in-house versus agency question. Not who has ideas, but who can move from insight to tested changes fast enough to matter this quarter. If your leadership team is weighing that trade-off, this breakdown of in-house vs agency marketing is a practical place to start.

A strong operating model is simple. Research feeds hypotheses. Hypotheses drive channel changes. Performance data determines what scales. Then the next sprint starts with better assumptions than the last one.

If your team needs help turning b to b market research into channel strategy, landing page changes, and measurable pipeline impact, Ezca Agency works with SaaS, e-commerce, and B2B brands in focused 90-day sprints across SEO, paid media, CRO, email, and content. The goal is not more reporting. It is faster decisions, tighter execution, and growth you can track.